Philosopher Carole Lee had been researching the problem of bias in academic peer review for several years when the National Institutes of Health (NIH) announced a competition on that very topic. Through an unusual collaboration, she crafted a proposal with statistician Elena Erosheva, earning first place in the creativity category of the competition.

Peer review, for the uninitiated, is the process by which research grants are awarded. Colleagues from across the country rate proposals on criteria such as innovation, soundness of approach, and institutional support. Given that these ratings determine which projects receive funding, sometimes in the millions of dollars, the implications of bias can be far-reaching.

“Reading literature on bias in peer review, I became fascinated by all the ways in which we are cognitively and socially biased—against other researchers, against certain types of research,” says Lee, assistant professor of philosophy. “I started thinking about the ramifications those biases might have, not just for my own career but for knowledge communities more generally.”

A 2011 paper in Science brought the bias problem to the forefront, demonstrating that Black investigators are ten percent less likely than White investigators to receive major NIH research grants, even controlling for factors like education, past training, and productivity. The Center for Scientific Review at NIH hosted the competition to identify new ways of measuring bias in peer review, with the goal of potentially revising their review process.

Lee knew she had a strong empirical and theoretical argument for her submission, but she needed statistical tools for measuring her new concept of bias. She contacted Erosheva, associate professor of statistics and social work, who had provided statistics expertise to Lee in the past as director of consulting in the Center for Statistics and the Social Sciences (CSSS). “In the literature on bias in peer review, it’s not uncommon for researchers to disagree about whether bias exists or not—even when dealing with the same data set—because they disagree about which statistical methods are the most appropriate to use. I invited Elena to join because I was confident she had the expertise to identify and justify the best study design,” recalls Lee. “I was pleased and grateful that she accepted my invitation even though I came to her just six weeks before the competition deadline.”

Erosheva was intrigued by both the project and the collaboration. “This type of statistics-philosophy collaboration is unusual,” she says. “And it is rather unusual for a true collaboration to arise from statistical consulting. This is the first time it has happened in my six-plus years of being the director of statistical consulting at CSSS. I think it takes an open mind and a deep understanding of a problem—together with the courage of admitting one’s own limitations—to invite a statistician on an intellectual journey.” The CSSS has been providing free statistical consulting since its inception in 1999, advising faculty, staff, and students from dozens of UW social science units at all stages of their research projects.

In their submission, Lee and Erosheva first noted how the current peer review process invites bias. Peer reviewers rate grant proposals along individual criteria, but then assign whatever overall rating they want, favoring whichever criteria they choose to favor. Studies have shown that individual reviewers, given identical submissions, weigh criteria differently depending on their own biases. Erosheva, who has a long-standing interest in multivariate data analysis, came up with a sophisticated approach that looks at a number of variables in weighing criteria and tries to make sense of the patterns.

As winners of the creativity category of the competition (one of three categories), Lee and Erosheva are now hoping to see some of their suggestions realized. “We contacted the director [of the Center for Scientific Review at NIH] to see whether we might be able to work in collaboration with them, and he’s setting up a phone call to discuss how we might be able to contribute,” says Lee. “It’s meaningful to me, particularly as a minority female faculty member, to think that I might be able to contribute to this conversation.”

More Stories

Before Med School, A Year in Paris

Graduating with bachelor's degrees in neuroscience and French, Hunter Jung is heading to France for a cognitive neuroscience program that reflects both interests.

Supporting a Threatened Language

For his UW master's in Scandinavian Studies, Estonian student Greg Rahuoja addressed political and practical challenges for Khanty, an Indigenous language spoken in parts of Siberia.

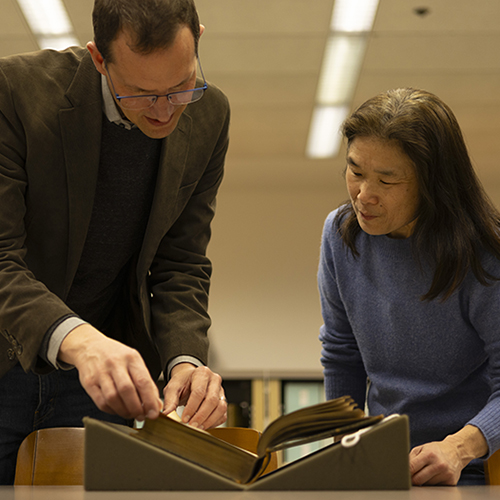

Sharing Shakespeare

Thanks to a School of Drama connection, an 19th-century illustrated Shakespeare edition with an interesting backstory is now part of UW Libraries Special Collections.